AI-driven Conditions

In BPMN 2.0, gateways route a process along different paths based on conditions. Traditionally, these conditions are simple boolean expressions evaluated against process data. Dynamixs.AI takes this a step further: with the `ConditionalAIAdapter`, routing decisions can be delegated entirely to a language model.

This means your process can make intelligent, context-aware decisions without hardcoded rules — based on natural language evaluation of the current business data.

How It Works

The ConditionalAIAdapter listens for BPMN condition evaluation events and intercepts them before they are resolved. Instead of evaluating a boolean expression, it sends the condition as a natural language prompt to the configured LLM and interprets the response as the routing result.

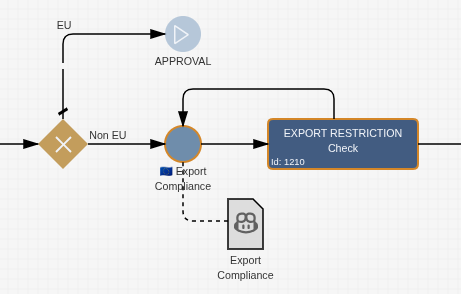

A typical use case: a process receives an order and needs to decide whether it goes to a standard or a customs-handling path. Instead of maintaining a hardcoded list of countries, you simply ask the LLM:

“Does this shipment go to a non-EU country?”

The model evaluates the question against the current process data and returns a result that drives the gateway decision — no rule maintenance required.
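The flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the adapter's actual implementation: the names `SYSTEM_ROLE`, `interpret_answer`, `evaluate_condition`, and `fake_llm` are hypothetical, and the accepted answer words ("true", "yes", "false", "no") are an assumption about how a model reply might be mapped to a routing result.

```python
from typing import Callable

# Hypothetical system instruction; the wording used by the real adapter
# is not documented in this section.
SYSTEM_ROLE = "Answer the question strictly with 'true' or 'false'."


def interpret_answer(answer: str) -> bool:
    """Map a free-text model answer to a boolean routing result."""
    normalized = answer.strip().strip(".!\"'").lower()
    if normalized in ("true", "yes"):
        return True
    if normalized in ("false", "no"):
        return False
    # An ambiguous answer should not silently pick a path.
    raise ValueError(f"Cannot interpret model answer as a condition: {answer!r}")


def evaluate_condition(prompt: str, ask_llm: Callable[[str, str], str]) -> bool:
    """Send the condition to the model and return the gateway decision."""
    return interpret_answer(ask_llm(SYSTEM_ROLE, prompt))


# Stand-in for a real LLM call so the sketch runs offline.
def fake_llm(system_role: str, prompt: str) -> str:
    return "True." if "non-EU" in prompt else "no"


print(evaluate_condition("Does this shipment go to a non-EU country?", fake_llm))
```

Raising an error on an unparseable answer, rather than defaulting to one branch, is a design choice of this sketch; it keeps an unclear model reply from being mistaken for a routing decision.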

Configuration

The `ConditionalAIAdapter` is configured in the Workflow Result of the BPMN Event using `name="CONDITION"`. For the prompt itself, you only need to supply the user prompt; the system role and response format are handled automatically by the adapter:

    <imixs-ai name="CONDITION">
      <endpoint>http://openai-api-server/</endpoint>
      <result-item>my.condition</result-item>
      <prompt>Is Germany an EU member country?</prompt>
      <debug>true</debug>
    </imixs-ai>

| Property | Type | Description |
|---|---|---|
| endpoint | URL | The REST API endpoint of your OpenAI-compatible LLM service |
| result-item | Text | The process variable where the condition result is stored |
| prompt | Text | The natural language condition to evaluate |
| debug | Boolean | Optional. Prints processing details to the log |

The result-item field stores the evaluated condition result on the process instance, where it can be referenced by the outgoing sequence flows of the gateway.
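To make the mapping between the XML definition and its properties concrete, the following Python sketch reads a `CONDITION` definition with the standard library XML parser. It is an illustration only: `read_condition_config` is a hypothetical helper, and treating a missing `debug` element as `false` is an assumption based on the property being optional.

```python
import xml.etree.ElementTree as ET

CONFIG = """
<imixs-ai name="CONDITION">
  <endpoint>http://openai-api-server/</endpoint>
  <result-item>my.condition</result-item>
  <prompt>Is Germany an EU member country?</prompt>
  <debug>true</debug>
</imixs-ai>
"""


def read_condition_config(xml_text: str) -> dict:
    """Extract the four documented properties from a CONDITION definition."""
    root = ET.fromstring(xml_text.strip())
    if root.tag != "imixs-ai" or root.get("name") != "CONDITION":
        raise ValueError("not a CONDITION configuration")
    return {
        "endpoint": root.findtext("endpoint", default="").strip(),
        "result_item": root.findtext("result-item", default="").strip(),
        "prompt": root.findtext("prompt", default="").strip(),
        # Assumption: debug is off when the element is absent.
        "debug": root.findtext("debug", default="false").strip().lower() == "true",
    }


config = read_condition_config(CONFIG)
print(config["result_item"], config["debug"])
```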

Dynamic Prompts

Just like with the `OpenAIAPIAdapter`, the condition prompt can reference live process data using `<itemvalue>` placeholders:

    <imixs-ai name="CONDITION">
      <endpoint>http://openai-api-server/</endpoint>
      <result-item>shipment.condition</result-item>
      <prompt>Does the shipment with destination '<itemvalue>order.country</itemvalue>' go to a non-EU country?</prompt>
    </imixs-ai>

At runtime, the placeholder is replaced with the actual field value from the process instance before the prompt is sent to the LLM.
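The substitution step can be illustrated with a short Python sketch. It is a simplified model of the behavior described above, not the adapter's code: `resolve_prompt` is a hypothetical helper, the process instance is represented as a plain dictionary, and resolving a missing item to an empty string is an assumption.

```python
import re

# Matches <itemvalue>item.name</itemvalue> and captures the item name.
ITEMVALUE = re.compile(r"<itemvalue>(.*?)</itemvalue>")


def resolve_prompt(template: str, workitem: dict) -> str:
    """Replace each <itemvalue> placeholder with the item's current value."""
    def lookup(match):
        # Assumption: items that are not set resolve to an empty string.
        return str(workitem.get(match.group(1).strip(), ""))
    return ITEMVALUE.sub(lookup, template)


template = ("Does the shipment with destination "
            "'<itemvalue>order.country</itemvalue>' go to a non-EU country?")
print(resolve_prompt(template, {"order.country": "Switzerland"}))
```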

Caching

The `ConditionalAIAdapter` includes a built-in caching mechanism. For each evaluation, it computes a hash of the prompt and stores it next to the result in the item `<result-item>.hash` (for example, `my.condition.hash` for a result item named `my.condition`). If the same prompt is encountered again within the same processing cycle, for example when a gateway is re-evaluated, the cached result is returned immediately without a second LLM call.

This prevents redundant API calls and ensures consistent results within a single processing cycle.
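The caching idea can be sketched in Python as follows. This is a minimal illustration under stated assumptions: the function `cached_condition` and the dictionary standing in for the process instance are hypothetical, and SHA-256 is chosen here only as an example, since the hash algorithm used by the adapter is not stated.

```python
import hashlib
from typing import Callable


def cached_condition(workitem: dict, result_item: str, prompt: str,
                     ask_llm: Callable[[str], bool]) -> bool:
    """Evaluate a condition, reusing the stored result for an unchanged prompt."""
    hash_item = f"{result_item}.hash"
    # Assumption: SHA-256 stands in for whatever hash the adapter computes.
    prompt_hash = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
    if workitem.get(hash_item) == prompt_hash and result_item in workitem:
        return workitem[result_item]  # cache hit: no LLM call
    result = ask_llm(prompt)
    workitem[result_item] = result
    workitem[hash_item] = prompt_hash
    return result


calls = []


def fake_llm(prompt: str) -> bool:
    """Stand-in for a real LLM call that records how often it is invoked."""
    calls.append(prompt)
    return True


workitem = {}
prompt = "Does the shipment with destination 'Switzerland' go to a non-EU country?"
first = cached_condition(workitem, "shipment.condition", prompt, fake_llm)
second = cached_condition(workitem, "shipment.condition", prompt, fake_llm)
print(first, second, len(calls))
```

Because the hash is derived from the resolved prompt, a change in the underlying process data produces a different prompt and therefore a fresh evaluation in this sketch.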